Key Takeaways

- Fog computing acts as a middle layer. It extends cloud computing to the network edge. This reduces delay and improves real-time processing

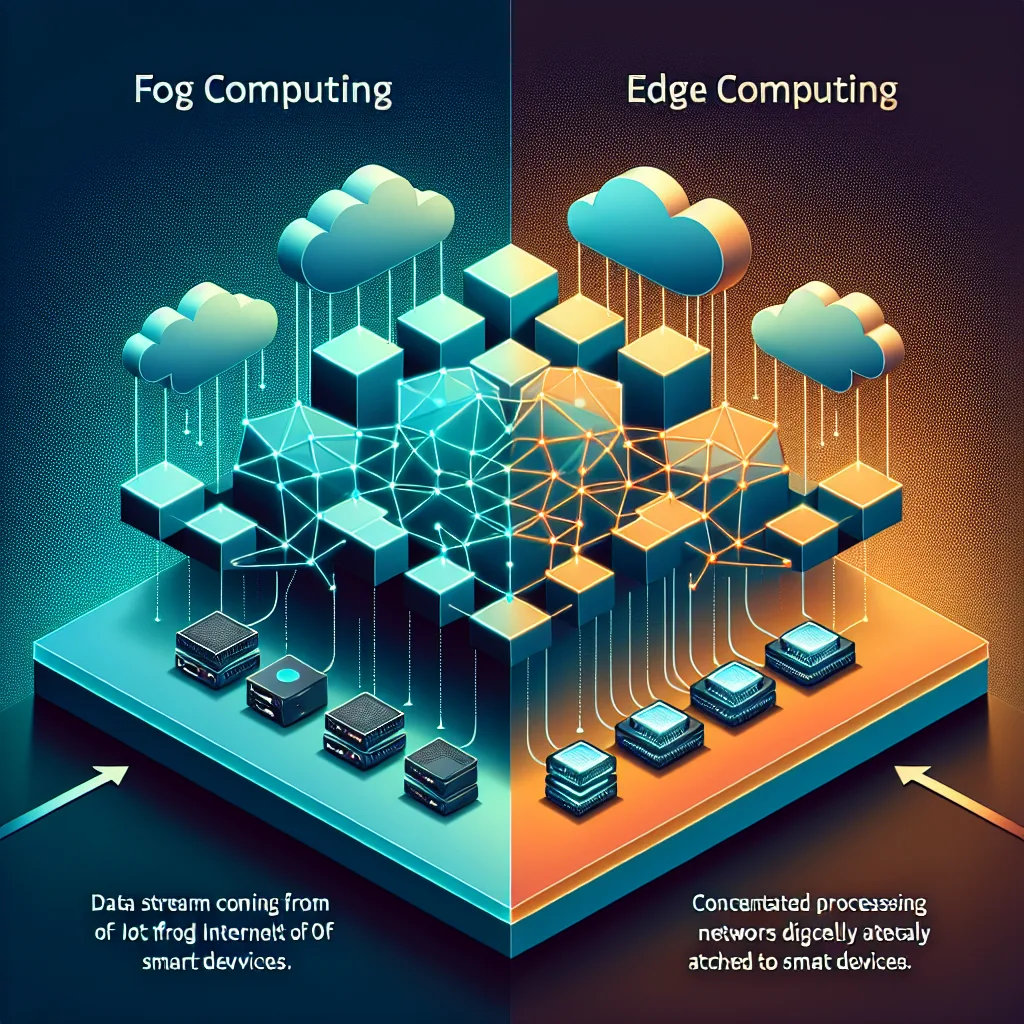

- Edge computing processes data directly on devices. Fog computing uses fog nodes placed between edge devices and cloud servers

- This computing setup helps IoT applications. It spreads data analysis across multiple layers instead of putting everything in the cloud

- Fog computing works well for low-delay needs. Examples include smart cities, self-driving cars, and factory automation

- AI and analytics benefit from fog computing. It processes data closer to the source while staying connected to cloud services

- The fog layer provides better security and bandwidth use than traditional cloud computing models

Fog computing bridges the gap between centralized cloud systems and distributed edge devices. This intermediate computing model meets the growing need for real-time data processing in IoT environments while keeping the scalability of cloud computing. For organizations that run smart devices and sensor networks, it helps manage the large volume of data created at the network edge.

Fog computing extends cloud computing closer to where data originates, which makes the overall distributed system more efficient. Traditional cloud computing processes all data in remote data centers, and edge computing processes it on or next to local devices. Fog computing adds processing points throughout the network between the two.

- What Is Fog Computing?

- Fog Computing vs Edge Computing: Understanding the Differences

- Fog Computing Architecture and Components

- Fog Computing and AI: Enhancing Real-Time Analytics

- Fog Computing Use Cases and Applications

- Benefits and Challenges of Fog Computing

- Frequently Asked Questions

What Is Fog Computing?

Fog computing is a distributed computing architecture that extends cloud services to the network edge through intermediate nodes called fog nodes. These nodes work like small data centers that sit between IoT devices and cloud servers. The result is a layered computing model that improves data processing and reduces latency.

A fog node can be any computing device with processing power, storage, and a network connection. Examples include industrial gateways, routers, and dedicated fog servers. This approach lets organizations process critical data locally while keeping connections to centralized cloud services for less urgent operations.

The fog computing model addresses three problems in modern IoT deployments. First, edge devices create massive amounts of data. Second, many applications need real-time processing. Third, bandwidth between devices and the cloud is limited. By processing data at intermediate points, fog computing reduces the load on both edge devices and cloud systems.

Key Components of Fog Computing

Fog computing systems have three main layers. The device layer includes the IoT sensors and smart devices that create data. The fog layer contains the fog nodes that provide intermediate processing. The cloud layer houses traditional cloud servers and data centers for long-term storage and complex analytics.

Fog Computing vs Edge Computing: Understanding the Differences

People often use the terms fog computing and edge computing interchangeably, but they describe different approaches to distributed processing. Edge computing processes data directly on edge devices or very close to them. Fog computing creates a network of intermediate processing nodes between the edge and the cloud.

Edge computing typically puts processing capabilities directly on or next to IoT devices. This reduces latency for applications that need immediate responses, but it is limited by the processing power available on individual devices. Edge servers or gateways might handle data from multiple devices, but the processing stays local.

Fog computing creates a more distributed processing network. Multiple fog nodes can work together on complex analytics and AI workloads that exceed what individual edge devices can handle. This architecture provides more flexibility in how workloads are spread across the network.

When to Choose Fog vs Edge Computing

Edge computing works best for applications that need ultra-low latency and can run on limited computing resources, such as simple sensor data filtering or basic automation responses. Fog computing is the better choice when you need more processing power than edge devices can provide but still need faster responses than the cloud allows.

The choice between edge computing and fog computing often depends on two things: the complexity of your analytics and the infrastructure you have available. Many organizations use both approaches as part of a complete distributed computing strategy.
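The selection logic described above can be sketched as a small function. This is only an illustration: the `Tier` class, the `choose_tier` function, and all latency and capacity figures are hypothetical placeholders, not measurements from a real deployment.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    name: str
    round_trip_ms: float  # assumed typical response time for this tier
    compute_units: float  # assumed relative processing capacity


# Illustrative numbers only; replace them with values measured in your network.
TIERS = [
    Tier("edge", round_trip_ms=2, compute_units=1),
    Tier("fog", round_trip_ms=15, compute_units=20),
    Tier("cloud", round_trip_ms=150, compute_units=1000),
]


def choose_tier(max_latency_ms: float, required_compute: float) -> Optional[str]:
    """Return the closest tier that meets both the latency budget and the compute need."""
    for tier in TIERS:  # ordered from nearest (edge) to farthest (cloud)
        if tier.compute_units >= required_compute and tier.round_trip_ms <= max_latency_ms:
            return tier.name
    return None  # no tier satisfies both constraints


simple_filter = choose_tier(max_latency_ms=5, required_compute=0.5)
video_analytics = choose_tier(max_latency_ms=50, required_compute=10)
model_training = choose_tier(max_latency_ms=1000, required_compute=500)
```

With these placeholder numbers, the simple filter lands on the edge, the analytics job on the fog tier, and the training job in the cloud. A workload that needs both very low latency and heavy compute returns `None`, which signals that the requirements have to be relaxed or the infrastructure extended.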

Fog Computing Architecture and Components

Fog computing architecture is a layered network that optimizes data flow between devices and cloud services. At the foundation, edge devices and sensors collect data from their environment. This data flows to nearby fog nodes, which can perform initial processing, filtering, and analysis. Each node then decides whether to handle a request locally or forward it to cloud servers.
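The handle-locally-or-forward decision can be sketched in a few lines. The `route_reading` function, the `ALARM_THRESHOLD` value, and the three outcome labels are assumptions made for this example; a real fog node would apply whatever rules its application defines.

```python
from typing import Optional

ALARM_THRESHOLD = 80.0  # assumed limit, for example degrees Celsius


def route_reading(value: Optional[float]) -> str:
    """Classify one sensor reading at the fog node."""
    if value is None:
        return "drop"  # filtering: discard empty readings
    if value >= ALARM_THRESHOLD:
        return "handle_locally"  # time-critical: act without a cloud round trip
    return "forward_to_cloud"  # routine data goes on for storage and analytics


decisions = [route_reading(v) for v in (21.5, None, 93.0)]
```

Here the normal reading is forwarded, the empty reading is dropped, and the reading above the threshold is handled at the fog node.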

Each fog node works as a micro data center with compute, storage, and networking capabilities. These nodes can run AI algorithms, perform real-time analytics, and make autonomous decisions based on predefined rules. Because the architecture is distributed, other nodes can keep working if one fails, which makes it more resilient than a centralized system.
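One simple way to picture this failover behavior is a preference list with health checks. The sketch below assumes that some monitoring process already reports each node's health as a boolean; `pick_node` and the node names are hypothetical.

```python
from typing import Dict, List, Optional


def pick_node(health: Dict[str, bool], preference: List[str]) -> Optional[str]:
    """Return the first healthy node in preference order, or None if all are down."""
    for node in preference:
        if health.get(node, False):  # unknown nodes are treated as unhealthy
            return node
    return None


# fog-a has failed, so traffic moves to the next preferred node.
health = {"fog-a": False, "fog-b": True, "fog-c": True}
chosen = pick_node(health, ["fog-a", "fog-b", "fog-c"])
```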

The network topology in fog computing is designed to reduce latency while maximizing processing efficiency. Fog nodes are placed strategically to serve specific geographic areas or functional domains. This creates logical boundaries that help manage data flow and reduce unnecessary network traffic.

Fog Node Deployment Strategies

Organizations can deploy fog nodes in several ways, depending on their needs. Some put fog nodes at network aggregation points, such as cellular base stations or Wi-Fi access points. Others place dedicated fog servers at strategic locations like manufacturing facilities or smart city infrastructure hubs.

Fog Computing and AI: Enhancing Real-Time Analytics

Fog computing is well suited to AI applications that need real-time decision-making. By placing AI processing at fog nodes, organizations can analyze data as it is created and avoid the latency of a round trip to the cloud. This capability is crucial for applications like self-driving cars, industrial automation, and smart grid management.

AI algorithms running on fog nodes can perform pattern recognition, predictive analytics, and anomaly detection in real time. These capabilities enable immediate responses to changing conditions, which improves safety and efficiency across various industries. The fog layer can also pre-process data for more complex AI models running in the cloud, creating a multi-tier analytics architecture.
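As a minimal illustration of spotting unusual values at a fog node, the sketch below flags readings that deviate strongly from a rolling window of recent values (a z-score check). It stands in for the far more capable models the article has in mind; the `AnomalyDetector` class, the window size, and the threshold are all assumptions.

```python
from collections import deque
from statistics import mean, pstdev


class AnomalyDetector:
    """Flag readings that lie far from the mean of a rolling window."""

    def __init__(self, window: int = 20, threshold: float = 3.0, warmup: int = 5):
        self.values = deque(maxlen=window)  # most recent normal readings
        self.threshold = threshold  # allowed distance in standard deviations
        self.warmup = warmup  # readings needed before any check is made

    def check(self, x: float) -> bool:
        is_anomaly = False
        if len(self.values) >= self.warmup:
            mu = mean(self.values)
            sigma = pstdev(self.values)
            if sigma > 0 and abs(x - mu) / sigma > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            self.values.append(x)  # only normal readings update the baseline
        return is_anomaly


detector = AnomalyDetector()
normal_flags = [detector.check(v) for v in (20.0, 20.1, 19.9, 20.2, 19.8, 20.0)]
spike_flag = detector.check(35.0)
```

The six readings near 20 are accepted as normal, and the jump to 35 is flagged. Because the check uses only a short window held in memory, it can run on modest fog hardware.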

Machine learning models deployed in fog computing environments benefit from lower latency and improved data privacy. Sensitive data can be processed locally within fog nodes, with only aggregated results or alerts sent to cloud services. This approach reduces bandwidth usage and keeps confidential information under tighter control.
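The idea of sending only aggregated results can be sketched as follows. The record layout (`device_id`, `value`), the `summarize` function, and the alert limit are hypothetical; the point is that the summary leaving the fog node contains no per-device identifiers.

```python
from statistics import mean
from typing import Dict, List


def summarize(readings: List[Dict], alert_above: float) -> Dict:
    """Reduce raw readings to an aggregate that carries no device identifiers."""
    values = [r["value"] for r in readings]
    return {
        "count": len(values),
        "mean": round(mean(values), 1),
        "max": max(values),
        "alerts": sum(1 for v in values if v > alert_above),
    }


# Raw readings stay on the fog node; only the summary is sent upstream.
raw = [
    {"device_id": "d1", "value": 72},
    {"device_id": "d2", "value": 130},
    {"device_id": "d3", "value": 80},
]
summary = summarize(raw, alert_above=120)
```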

AI Workload Distribution

Fog computing enables smart workload distribution for AI applications. Lightweight AI tasks, such as sensor data classification or inference with a small image recognition model, can run on fog nodes. More complex models that need extensive processing power can run in the cloud. This hybrid approach optimizes both performance and resource use.

Fog Computing Use Cases and Applications

Smart cities represent one of the most compelling use cases for fog computing. Traffic management systems can use fog nodes to analyze data from multiple sensors and cameras and adjust traffic light timing and routing recommendations in real time. This local processing avoids the latency that would occur if every decision required communication with distant cloud servers.

Industrial IoT applications benefit significantly from fog computing's ability to process data at intermediate points. Manufacturing facilities can deploy fog nodes to monitor equipment health, predict maintenance needs, and optimize production processes. Because these applications need immediate responses to changing conditions, they often perform better with fog computing than with cloud-only approaches.

Healthcare monitoring systems use fog computing to process patient data from wearable devices and medical sensors. Critical alerts can be generated locally at fog nodes, so emergency situations get immediate attention even if the cloud connection is temporarily unavailable.

Autonomous Vehicle Networks

Self-driving cars can use fog computing infrastructure to share real-time traffic and road condition data. Fog nodes positioned along roadways can gather information from multiple vehicles, process it with AI algorithms, and send relevant updates back to vehicles in the area. This distributed processing model supports the low-latency requirements essential for vehicle safety systems.
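A roadside fog node that combines reports from several vehicles might look roughly like this. The report format (road segment ID plus speed), the `congested_segments` function, and the congestion rule are assumptions chosen for illustration, and a simple average stands in for the AI processing mentioned above.

```python
from collections import defaultdict
from statistics import mean
from typing import Iterable, List, Tuple


def congested_segments(
    reports: Iterable[Tuple[str, float]],
    speed_limit_kmh: float = 50.0,
    ratio: float = 0.5,
) -> List[str]:
    """Return segments whose average reported speed is below ratio * speed limit."""
    by_segment = defaultdict(list)
    for segment_id, speed_kmh in reports:
        by_segment[segment_id].append(speed_kmh)
    return sorted(
        seg for seg, speeds in by_segment.items()
        if mean(speeds) < ratio * speed_limit_kmh
    )


# Each tuple is one vehicle report: (road segment, current speed in km/h).
reports = [("A", 45), ("A", 50), ("B", 10), ("B", 20), ("C", 30)]
slow = congested_segments(reports)
```

Only segment B, where vehicles average 15 km/h, falls below the assumed threshold of 25 km/h. The fog node could then broadcast that single result to nearby vehicles instead of relaying every raw report.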

Benefits and Challenges of Fog Computing

Fog computing offers several advantages over traditional cloud computing models. Reduced latency is perhaps the most significant. Processing data closer to its source avoids the round trip to a distant data center, which is critical for applications that need real-time responses.
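A rough worked estimate shows the scale of the difference. It considers only signal propagation and assumes light travels through optical fiber at about 200,000 km/s. The distances are hypothetical: a cloud data center 1,500 km away and a fog node 5 km away. Queuing, routing, and processing time are ignored, so real figures will be higher for both.

$$
t_{\text{rtt}} \approx \frac{2d}{v}, \qquad v \approx 2 \times 10^{5}\ \text{km/s}
$$

$$
t_{\text{cloud}} \approx \frac{2 \times 1500\ \text{km}}{2 \times 10^{5}\ \text{km/s}} = 15\ \text{ms},
\qquad
t_{\text{fog}} \approx \frac{2 \times 5\ \text{km}}{2 \times 10^{5}\ \text{km/s}} = 0.05\ \text{ms}
$$

Under these assumptions, distance alone adds about 15 ms to every cloud round trip, while the fog node's propagation delay is negligible.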

Bandwidth optimization is another key advantage. By processing and filtering data at fog nodes, organizations can reduce the amount of data sent to cloud servers. This is particularly valuable in environments with limited network connectivity or high data volumes from many sensors.
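One common filtering technique is a deadband filter, which forwards a sample only when it has changed enough since the last forwarded value. The sketch below is a minimal version with made-up sample data; `deadband_filter` and the 0.5 threshold are assumptions.

```python
from typing import List


def deadband_filter(samples: List[float], min_change: float) -> List[float]:
    """Keep a sample only if it differs from the last kept one by at least min_change."""
    kept: List[float] = []
    for s in samples:
        if not kept or abs(s - kept[-1]) >= min_change:
            kept.append(s)
    return kept


samples = [20.0, 20.1, 20.1, 20.2, 21.0, 21.1, 21.0, 23.5]
kept = deadband_filter(samples, min_change=0.5)
reduction = 1 - len(kept) / len(samples)  # fraction of samples not transmitted
```

In this example three of the eight samples are forwarded, so 62.5% of the transmissions are avoided. The actual saving depends entirely on how noisy the signal is and how large a change the application needs to see.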

Enhanced reliability comes from the distributed nature of fog computing. If the connection to cloud services is interrupted, fog nodes can continue processing data and making decisions on their own. This helps critical systems remain operational during network outages.
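A store-and-forward buffer is one way a fog node can ride out an outage: messages queue locally and are delivered in order once the uplink returns. The sketch below is illustrative only. `StoreAndForward` and `FakeUplink` are hypothetical names, and the uplink is simulated rather than a real cloud client.

```python
from collections import deque


class StoreAndForward:
    """Buffer outgoing messages and deliver them in order when the uplink works."""

    def __init__(self, send, max_buffered: int = 1000):
        self.send = send  # callable that returns True on successful delivery
        # When the buffer is full, the oldest message is discarded first.
        self.buffer = deque(maxlen=max_buffered)

    def publish(self, message) -> None:
        self.buffer.append(message)
        self.flush()

    def flush(self) -> int:
        """Send buffered messages in order; stop at the first failure."""
        sent = 0
        while self.buffer:
            if not self.send(self.buffer[0]):
                break
            self.buffer.popleft()
            sent += 1
        return sent


class FakeUplink:
    """Stand-in for a cloud connection that can be switched on and off."""

    def __init__(self):
        self.online = False
        self.received = []

    def __call__(self, message) -> bool:
        if self.online:
            self.received.append(message)
        return self.online


uplink = FakeUplink()
node = StoreAndForward(uplink)
node.publish("reading-1")  # uplink is down, so the message stays buffered
node.publish("reading-2")
uplink.online = True
delivered = node.flush()  # both messages are delivered in order
```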

Implementation Challenges

Managing a distributed fog computing infrastructure is complex. Organizations must coordinate updates, security patches, and configuration changes across many fog nodes, which requires sophisticated orchestration tools and processes.
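A small part of that coordination, finding outdated nodes and updating them in batches so that only a few are offline at once, can be sketched as below. The `plan_rollout` function, the node names, and the version strings are hypothetical; real orchestration tools also handle health checks and rollback.

```python
from typing import Dict, List


def plan_rollout(versions: Dict[str, str], target: str, batch_size: int) -> List[List[str]]:
    """Group nodes that are not on the target version into update batches."""
    outdated = sorted(node for node, v in versions.items() if v != target)
    return [outdated[i:i + batch_size] for i in range(0, len(outdated), batch_size)]


# Versions as reported by each fog node (made-up values).
versions = {"fog-a": "1.2", "fog-b": "1.3", "fog-c": "1.1", "fog-d": "1.2"}
batches = plan_rollout(versions, target="1.3", batch_size=2)
```

Here `fog-b` is already current, so the plan contains two batches: `fog-a` and `fog-c` first, then `fog-d`.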

Security also becomes more complex in fog computing environments. Each fog node is a potential point of attack that must be secured and monitored. Implementing consistent security policies across distributed fog nodes while maintaining performance can be challenging.

Computing Architecture Hierarchy

The differences between cloud computing and fog computing become clear when you compare where each one sits and what it can process. Cloud computing centralizes data processing in remote data centers. Fog computing distributes processing power closer to data sources, creating an intermediate layer that bridges edge devices and the cloud. This layered approach lets organizations place workloads according to latency needs, bandwidth limits, and security requirements.

Edge and fog computing work together to form a complete distributed computing framework that addresses the limitations of cloud-only approaches. Edge computing processes data directly at or near the source devices. Fog computing aggregates and manages data from multiple edge nodes, then forwards relevant information to cloud infrastructure. The difference between the two lies mainly in scope and processing capability: fog nodes handle more complex analytics and coordination tasks across multiple edge devices.

Security Implications in Distributed Computing

Security in edge and fog deployments presents challenges that differ significantly from traditional cloud security models and calls for approaches designed for distributed infrastructure. When data processing happens across multiple fog nodes, edge devices, and the cloud, there are more points of attack and more places where data can be exposed. Key concerns include device authentication, data encryption in transit, and maintaining consistent security policies across the entire computing hierarchy.
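As one hedged illustration of device authentication, a fog node can verify that a message really comes from a device holding a shared secret by checking an HMAC signature. This sketch uses Python's standard `hmac` and `hashlib` modules. The key, the payload, and the `sign` and `verify` helpers are made up for the example. Note that an HMAC provides authenticity and integrity only; encrypting data in transit would typically be handled separately, for example with TLS.

```python
import hashlib
import hmac


def sign(key: bytes, payload: bytes) -> str:
    """Compute an HMAC-SHA256 signature for the payload."""
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify(key: bytes, payload: bytes, signature: str) -> bool:
    """Check a signature using a constant-time comparison."""
    return hmac.compare_digest(sign(key, payload), signature)


# Hypothetical secret provisioned on both the device and the fog node.
device_key = b"example-shared-secret"
payload = b'{"sensor": "temp-1", "value": 21.5}'

signature = sign(device_key, payload)  # computed on the device
accepted = verify(device_key, payload, signature)  # checked on the fog node
tampered = verify(device_key, payload + b" ", signature)  # modified payload fails
```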

Internet of Things deployments can benefit from fog computing's security capabilities, which include local threat detection and response mechanisms that complement cloud-based security services. The difference between centralized and distributed security approaches becomes critical when designing fog computing architectures, which must protect sensitive industrial data while maintaining operational efficiency. Edge and cloud security models need different strategies. Fog computing offers a balanced approach that combines local security enforcement with centralized policy management.

Understanding how fog and edge computing differ from cloud computing helps organizations make informed decisions about infrastructure investments and security strategies. Comparisons of fog computing and cloud computing often overlook the specific benefits that fog computing provides, particularly in scenarios that need real-time processing with robust security controls. The edge computing versus fog computing debate centers on finding the right balance of processing power, latency, and security for specific industrial applications.

Fog computing emerged as organizations needed to address the growing gap between edge devices and centralized cloud infrastructure. While edge computing brings processing closer to data sources, fog computing creates an intermediate layer that provides additional compute and storage resources between these endpoints. This distributed approach can also improve security, because it reduces the amount of sensitive data that needs to be sent to the cloud for processing.

Understanding the difference between fog and edge computing requires looking at where each operates and what it can do. Edge computing operates at the network's edge, directly connected to IoT devices and sensors. Fog computing extends this concept with a mesh of interconnected nodes that can handle more complex processing and storage needs. The growth of IoT has accelerated adoption of both approaches, because cloud-only architectures struggle with latency and bandwidth limits.

Security Advantages in Fog Architecture

Fog computing security benefits from a decentralized model that distributes security management across multiple nodes rather than relying on a single point of control. This architecture can strengthen protection against unauthorized access by implementing security features at multiple layers of the infrastructure. Organizations can maintain better control over sensitive data and over the points where it enters the cloud, which reduces exposure to potential breaches.

The distributed nature of fog networks creates multiple security checkpoints that can filter and process data before it reaches centralized systems. Each fog node can implement its own security protocols while staying connected to broader network security management systems. This layered approach strengthens overall system resilience and provides multiple opportunities to detect and respond to security threats.

Resource Management and Service Delivery

Fog computing and edge computing are complementary approaches that address different aspects of distributed processing. Fog nodes typically offer more computing power than edge devices, so they can handle complex analysis and storage tasks that exceed edge capabilities. This positioning allows fog infrastructure to support software-as-a-service deployments that need more resources than edge devices can provide.

Modern fog implementations integrate various storage services and processing capabilities to meet diverse application needs. Organizations can deploy solutions that distribute workloads across edge, fog, and cloud tiers based on latency needs, data sensitivity, and resource availability. Fog computing enables this flexible resource allocation while maintaining efficient connections between all system components.

Organizations implementing distributed computing architectures often struggle to understand the differences between the fog, edge, and cloud layers. Fog computing bridges the gap by providing intermediate processing capabilities that complement both edge devices and centralized cloud infrastructure. This layered approach enables more efficient data flow management across the entire computing spectrum.

When deploying IoT solutions at scale, companies must carefully plan how work is divided between the fog and edge layers to maximize system performance. The fog layer processes time-sensitive data locally and routes less critical information to cloud servers for deeper analytics. This distributed processing model reduces bandwidth costs and improves response times for mission-critical applications.

Architectural Considerations for Fog Implementation

Fog nodes typically consist of industrial gateways, routers, and specialized computing appliances positioned strategically throughout the network. These devices aggregate data from multiple edge sensors while providing local processing, storage, and networking capabilities. The fog layer creates a distributed computing fabric that extends cloud services closer to data sources.

Manufacturing environments particularly benefit from fog computing's ability to handle real-time control loops and predictive maintenance algorithms. Factory floor sensors create massive data volumes that need immediate processing to prevent equipment failures or quality issues. Fog nodes filter and analyze this data stream, sending only actionable insights to enterprise systems while maintaining local operational control.

Frequently Asked Questions

What's the difference between fog computing and edge computing?

Fog computing creates intermediate processing nodes between edge devices and cloud servers, while edge computing processes data directly on or very close to the devices themselves. Fog computing provides more processing power and coordination capability than edge computing, which makes it suitable for complex analytics and AI workloads.

How does fog computing reduce latency compared to cloud computing?

Fog computing reduces latency by processing data at fog nodes positioned closer to edge devices, which removes the need to send data to distant cloud servers for every operation. This proximity significantly decreases the time needed for real-time analytics and decision-making.

Can fog computing work without cloud connectivity?

Yes, fog nodes can operate independently when the cloud connection is unavailable. This autonomy allows fog computing systems to continue processing data and making decisions locally, which provides better reliability than systems that depend entirely on cloud services.

What types of applications benefit most from fog computing?

Applications benefit most from fog computing when they need real-time processing and more computing resources than individual edge devices can provide. Examples include smart city infrastructure, industrial IoT systems, self-driving vehicle networks, and healthcare monitoring applications.

How does fog computing handle data security and privacy?

Fog computing can enhance data security by processing sensitive information locally at fog nodes instead of transmitting it to cloud servers. This approach keeps critical data closer to its source while still enabling coordination between multiple nodes when necessary.

What hardware is required to implement fog computing?

Fog computing needs fog nodes with sufficient processing power, storage, and network connectivity to handle local analytics and AI workloads. These can range from industrial gateways and edge servers to dedicated fog computing appliances. The choice depends on the specific use case.

What's the difference between fog computing and traditional cloud computing?

Fog computing differs from cloud computing in its distributed architecture and proximity to data sources. It processes information at intermediate nodes rather than in centralized data centers. This approach reduces latency and bandwidth usage while providing better support for real-time applications. Cloud and fog computing are complementary: fog handles time-sensitive processing, and the cloud manages long-term storage and complex analytics.

How do edge and fog computing address security concerns in IoT deployments?

Edge and fog computing enhance security by processing sensitive data locally rather than transmitting everything to remote cloud servers, which reduces exposure during data transit. They can also enforce distributed security policies that respond to threats in real time without relying on a cloud connection. Combining cloud, fog, and edge security models allows organizations to create layered defense strategies that protect industrial systems at multiple levels.

What are the key differences between cloud, fog, and edge computing architectures?

The differences between cloud computing, fog computing, and edge computing relate to processing location, latency characteristics, and available resources within the computing hierarchy. Edge computing occurs directly on devices or nearby gateways. Fog computing aggregates processing across multiple edge nodes. Cloud computing centralizes resources in remote data centers. Understanding these architectural differences helps organizations select appropriate computing solutions for their specific latency, bandwidth, and processing needs.

How does fog computing handle security and privacy concerns in industrial environments?

Fog computing addresses security and privacy concerns by processing and encrypting data locally before transmitting information to cloud services. This distributed approach minimizes sensitive data exposure and can help organizations comply with industry regulations and privacy requirements. A fog architecture lets organizations keep critical data within their operational boundaries while still benefiting from cloud-based analytics and storage.

What computing power do fog nodes typically provide compared to edge devices?

Fog nodes deliver significantly more computing power than typical edge devices. They often feature multi-core processors, substantial memory, and dedicated storage. This allows fog infrastructure to handle complex processing and storage needs that exceed edge device limitations while still maintaining lower latency than cloud-based solutions.

How does fog computing security differ from traditional cloud security approaches?

Fog computing security uses a distributed model in which security management occurs across multiple network layers rather than centralizing all protection in the cloud. This creates multiple checkpoints that can detect unauthorized access attempts and apply security policies closer to data sources before information is sent to the cloud.

What role does fog computing play in the growth of IoT deployments?

The growth of IoT has driven fog computing adoption because organizations need intermediate processing layers to handle massive data volumes from connected devices. Fog computing enables efficient analysis and storage of IoT data while reducing the bandwidth and latency issues associated with sending all sensor data directly to centralized data centers and cloud infrastructure.

How do fog computing and edge computing work together in modern architectures?

Edge computing brings immediate processing capabilities to device locations, and fog computing extends this with additional compute and storage resources at intermediate network points. This layered approach allows organizations to distribute workloads effectively: the edge handles real-time responses, and the fog layer manages more complex analysis before data is passed on to the cloud.

How do organizations determine the optimal fog computing architecture?

Companies must analyze their data processing needs, network topology, and latency limits to design effective fog implementations. The architecture should balance local processing capabilities with cloud connectivity needs. Organizations typically deploy fog nodes at strategic network points where they can serve multiple edge devices while maintaining reliable cloud connections.

What hardware requirements support fog computing deployments?

Fog computing nodes need sufficient processing power, memory, and storage to handle local analytics and data aggregation tasks. Industrial-grade hardware with redundant networking helps ensure reliable operation in harsh environments. These systems must also support various communication protocols to interface with diverse edge devices and cloud platforms.

How does fog computing impact network bandwidth utilization?

Fog computing significantly reduces bandwidth consumption by processing and filtering data before transmission to cloud servers. Local analytics reduce the need to send raw sensor data streams across wide area networks. This approach optimizes network resources while maintaining comprehensive data visibility for enterprise applications.

Fog computing represents a shift in how organizations approach distributed computing. By creating strategic processing points throughout the network, fog computing enables real-time analytics and AI applications while keeping the scalability of cloud computing. As IoT deployments continue to grow, fog computing will become increasingly important for managing the complexity and performance needs of modern connected systems. Organizations planning their M2M system architecture should carefully consider how fog computing can enhance their data processing capabilities and improve overall system performance.
